Last spring, in the course of workshopping ideas for the beyondAEC Hackathon, the concept for an office-against-office design technology competition emerged. Coincidentally, the 2017 Greenbuild International Conference and Expo was co-located with the Architecture Boston eXpo (ABX) -- an annual AEC industry conference hosted by the Boston Society of Architects (BSA). What a perfect venue for exhibiting expertise in the Greater Boston community through a live competition in full view of the public! After I connected with Billy Craig from the BSA and Karen Young from Informa, both organizers absolutely loved the idea and set out to bring it to life.

The Design Technology Throwdown was born and the rules were simple:

DTT is a first-of-its-kind competition where teams will face off to see who can design the best solution to a shared design problem. A champion will be crowned for the best use of contemporary technology to develop, document, and validate their design. In keeping with Greenbuild's mission of sustainability, extra consideration will be given to projects that incorporate environmental analysis and sustainable design strategies. So assemble a team of 4 to represent your organization and register to throw down against Boston's best!

A panel of expert judges was assembled:

Nirva Fereshetian, Chief Information Officer, CBT Architects

Jordan Goldman, Engineering Principal, ZeroEnergy Design

Michael Kyes, Architectural Team Leader, SMMA

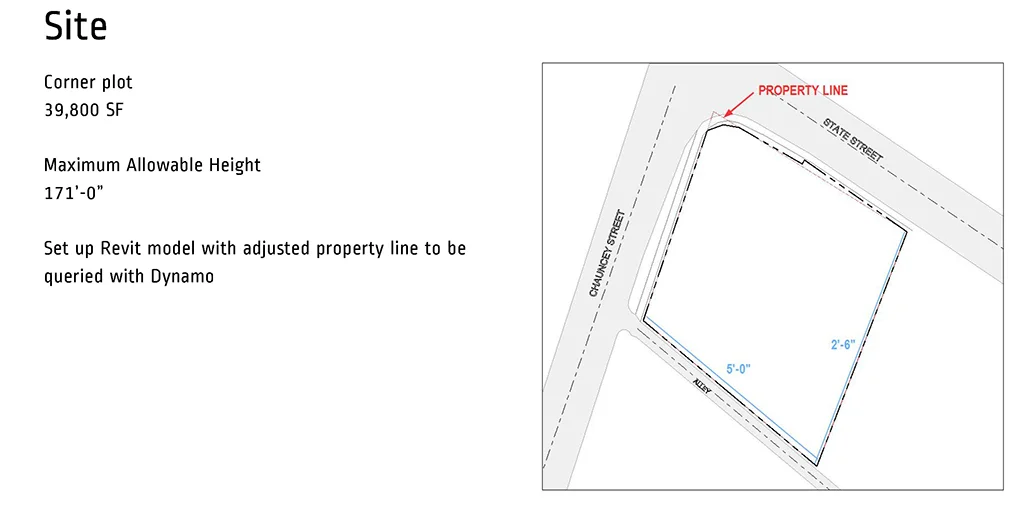

Four brave teams registered to answer the challenge, and 24 hours before the start of the event, a design brief outlining all the particulars of the site was distributed.

Arrowstreet: Edbert Cheng, Gaby Baierle-Atwood, Katherine Chin, Tristan McGuire

CBT Architects: Rood Vincent, Lynn Quagliato, Anthony Polidoro, Marwan Ghabour

Elkus Manfredi Architects: May Dussadeevutikul, Alex Nunes, Nensi Karanxha, Sherry Li

Microdesk: Eric Rudisaile, Jackie Doak, Angela Doyle, Mike McGovern

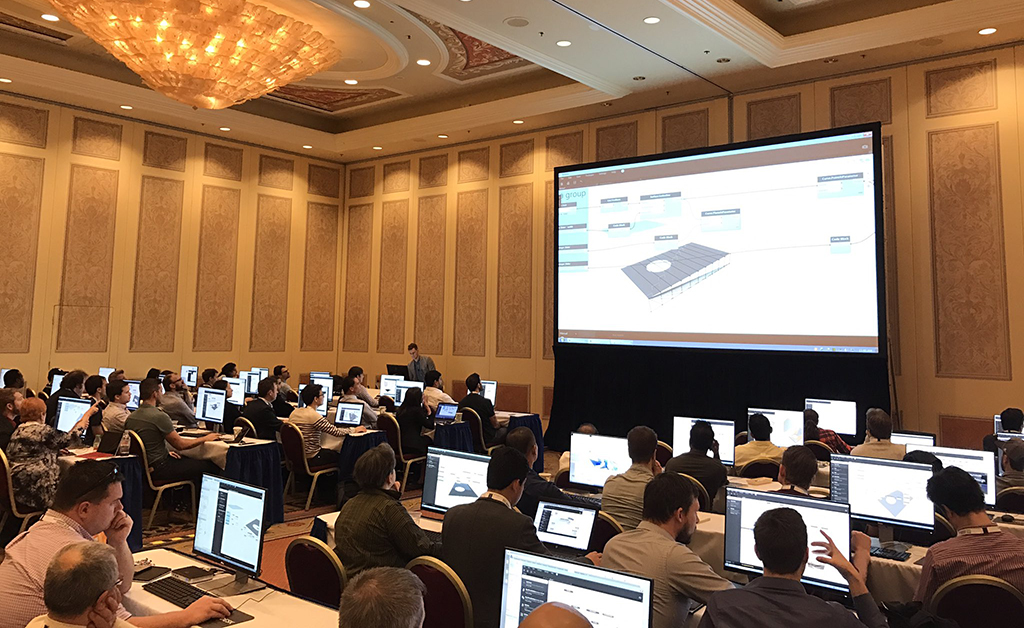

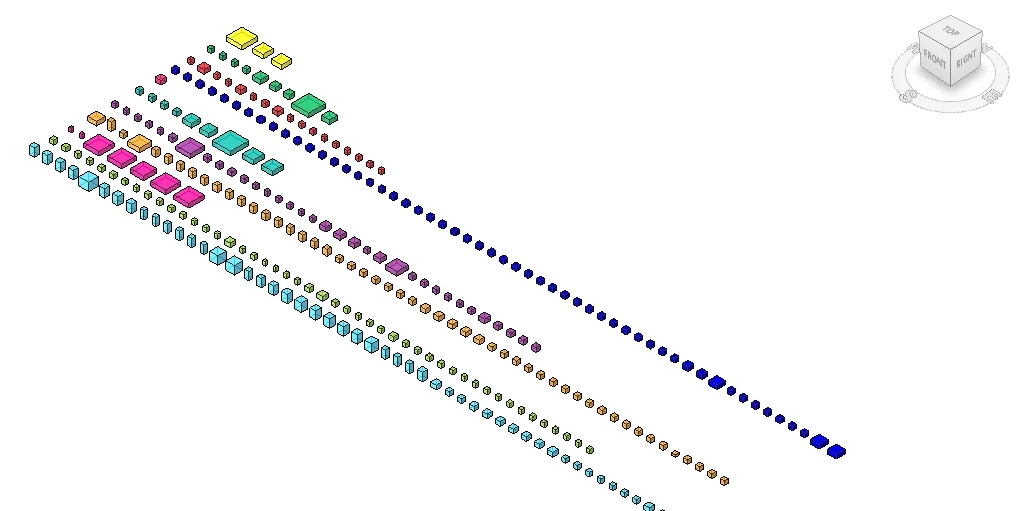

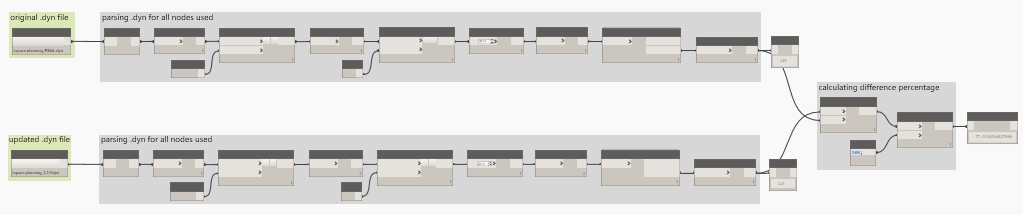

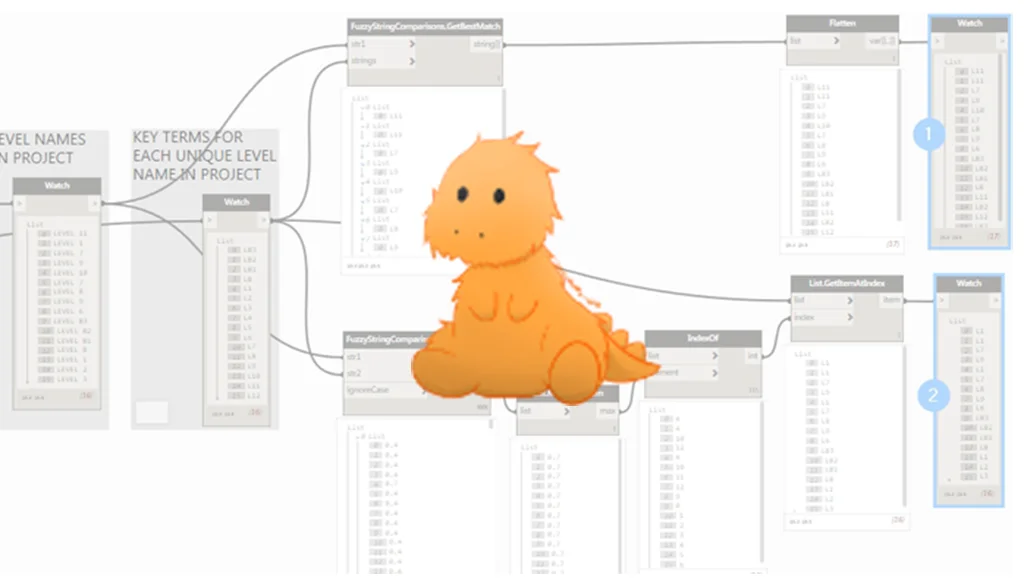

On Thursday, November 9th, the competition kicked off with a four-hour production period. Each team got right down to business developing its response to the site.

Following the production portion, each team had five minutes to present its design, with the judges asking clarifying questions after every pitch. Watch the entire recap and thumb through the accompanying slide decks for each team:

After every team had presented, the judges deliberated and one winner was chosen -- Arrowstreet!

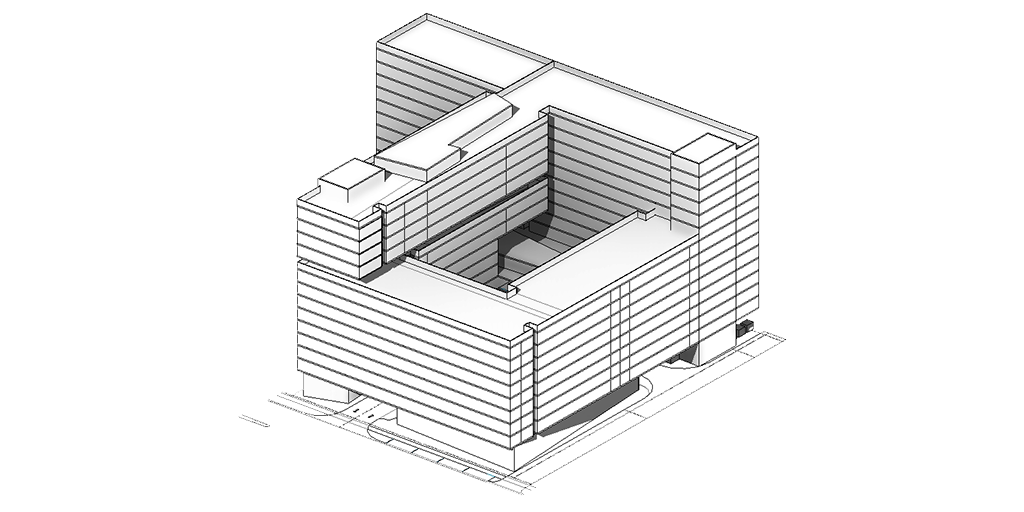

"[The winning project]... was called Place to Grow, a proposed live+work building with tiers for urban farming, allowing residents to grow their food and have a farm-to-table market and public space on the ground level."

-- Gabriela Baierle-Atwood, AIA, Arrowstreet

Brad Prestbo (DTT organizer), Edbert Cheng, Gaby Baierle-Atwood, Katherine Chin, Tristan McGuire, Kyle Martin (DTT organizer)

Reflecting on the Design Technology Throwdown, I found it a pleasure to watch the different ways that both individuals and offices work. Just as fascinating as the contrast in workflows and approaches across offices were the numerous similarities among the design ideas, tools, and skill sets of the participants. I truly enjoyed seeing the local community come together to recognize and celebrate the amazing surge in design technology that is currently taking place in the AEC industry. As a persistent evangelist of evolving digital tools, I am inspired to continue organizing events like the Design Technology Throwdown to further encourage knowledge sharing, embrace common expertise, and elevate the future of the industry in solidarity.

Several weeks following Greenbuild/ABX 2017, the trophy arrived in the mail and was presented to Arrowstreet.

Photo credit: Sara Garber, Arrowstreet

A huge thanks goes out to the Informa team -- Karen Young, Shelby Snelson, and Anna Lehman -- as well as Billy Craig from the Boston Society of Architects; without their support, this event would not have happened. I would also like to thank my co-organizer Brad Prestbo (Sasaki) and expert volunteers Peter Atwood (design technology educator) and Daniel Fink (MIT/Placeful.io). We appreciate all three distinguished judges, who volunteered their valuable time to provide insightful criticism and perform the challenging task of picking a winner. Lastly, thank you to the hard-working participants, and congratulations to the Arrowstreet team!